AI and Information Quality: How Generative Systems Are Changing What We Trust and Why It Matters

Generative AI is reshaping how people encounter facts, news, and creative work. This evidence-focused guide explains what’s changing in information quality, what people report as benefits, documented risks (with sources), who is most affected, and practical steps readers can take to evaluate and use AI responsibly.

Responsible AI Operations: Governance That Works — Practical Guidance for Compliance and Oversight

A neutral, evidence-based guide to Responsible AI operations that summarizes international standards, regulator guidance (EU, UK, US, Singapore), and practical controls organisations can implement. It focuses on governance, documentation, risk management, and realistic compliance steps. This is informational, not legal advice.

How to Choose LLM Platforms in 2026: Practical Criteria, Trade-offs, and Alternatives

An evidence-based guide to evaluating LLM platforms in 2026. This article explains what LLM platforms do (and don’t), key features and limitations, pricing and security considerations, reliability pitfalls, and when to pick alternative solutions. Includes vendor facts and third-party benchmark context to help procurement and engineering teams make realistic comparisons.

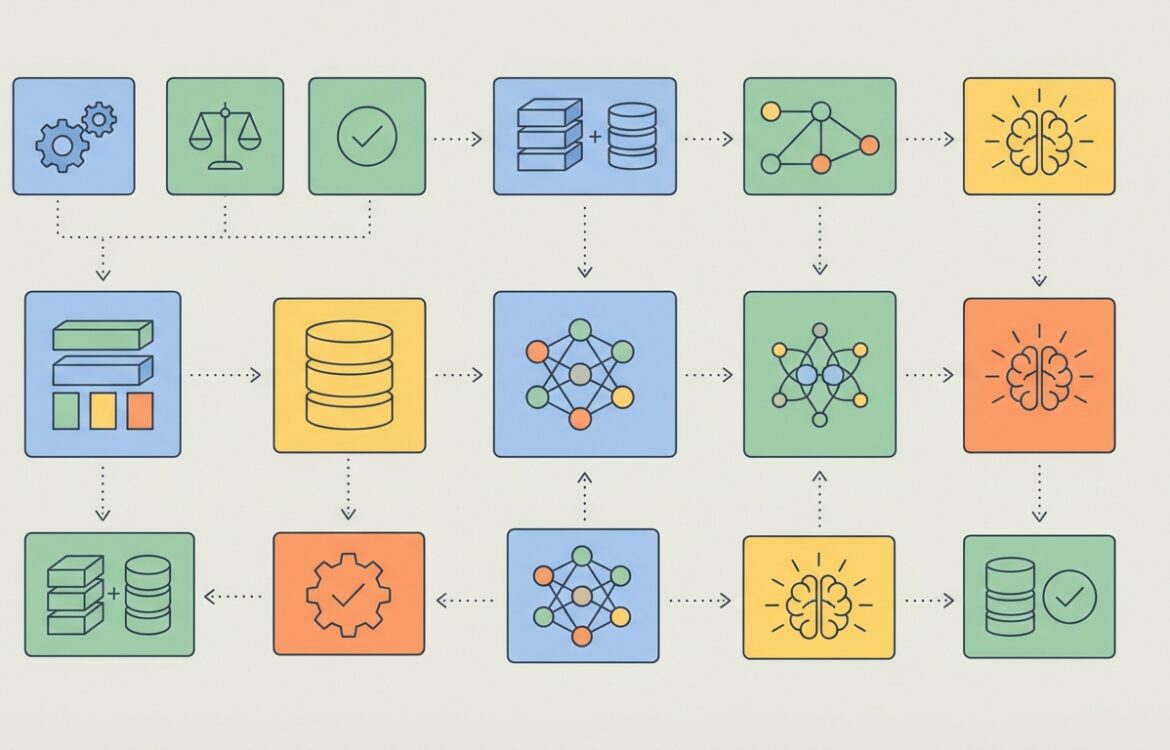

RAG tooling: Search + LLM Done Right — Practical review and comparison

A practical, evidence-based review of RAG tooling (search + LLM) for engineers and product teams. This article explains what RAG tooling delivers, key features and limitations of major options (Pinecone, Weaviate, Chroma, Qdrant, Milvus), pricing and compliance trade-offs, reliability pitfalls, and when to pick alternatives. Sources and changelogs cited.

Privacy for AI Products: A Practical Guide for Developers and Compliance Teams

A neutral, evidence-based practical guide to privacy considerations when building, deploying, or buying AI products. Covers definitions, what regulators in the EU, UK and US say, DPIA and ADMT obligations, practical controls (data minimisation, logging, model governance, differential privacy), common misconceptions, and open questions for product teams and privacy officers. This is informational, not legal advice.

Copyright and AI: What Creators Should Know — A Practical Guide for Creators

An evidence‑based overview for creators on how copyright intersects with generative AI. Covers definitions, major regulatory developments and cases in the US, EU and UK, practical steps creators and small teams can take to reduce legal and reputational risk, common misconceptions, and open questions that may change how works are used to train and deploy AI.

Open vs Closed AI: Mapping the Competitive Landscape and Practical Trade‑offs

This evidence‑led analysis examines the competitive dynamics between open and closed AI approaches. It separates verified signals—model releases, enterprise adoption, API gating, and cost trends—from areas that remain uncertain, and offers practical implications and watch‑list metrics for teams and decision makers.

Humans and AI: Social and Psychological Effects — What the Evidence Says About Work, Learning, Creativity and Relationships

This evidence-aware overview examines how humans and AI interact across work, education, media, creativity and relationships. It summarizes observable signals, reported benefits, documented risks, who is affected, and practical guidance based on research and reputable surveys.

Securing LLM Apps: Practical Threat Modeling for RAG, Fine‑Tuning, and Deployment

A practical, implementation‑focused guide to threat modeling Large Language Model (LLM) applications. Covers attack classes (prompt injection, model extraction, data poisoning), RAG/vector DB considerations, fine‑tuning risks, mitigations (access control, differential privacy, monitoring), testing and red‑teaming, and common implementation mistakes, all grounded in published research, vendor guidance, and standards.

LLM Evaluation Tools: How to Measure What Matters When Comparing Evaluation Frameworks

A neutral, evidence-based guide to key LLM evaluation tools, what they actually measure, trade-offs, pricing and data/privacy considerations. Covers OpenAI Evals, LM‑Eval‑Harness, Hugging Face Evaluate, HELM and practical alternatives so teams can choose the right evaluation approach for real-world LLM development.
