Author: Sofia Alvarez

Safety and Misuse: Deepfakes, Fraud, and Abuse — An Evidence-Based Compliance Guide

A neutral, evidence-based overview of the risks, regulatory responses, and practical compliance steps related to deepfakes, fraud, and abuse. Covers definitions, detection limitations, U.S./EU/UK approaches, recent federal and state measures, and recommended controls for organizations handling synthetic media risks.

The EU AI Act: Practical Compliance Guide for Providers and Deployers

A neutral, evidence-based practical guide to complying with the EU AI Act. It outlines scope, high‑risk classification, conformity assessment, registration and documentation duties, timelines, and practical steps organizations can take to prepare, without offering legal advice.

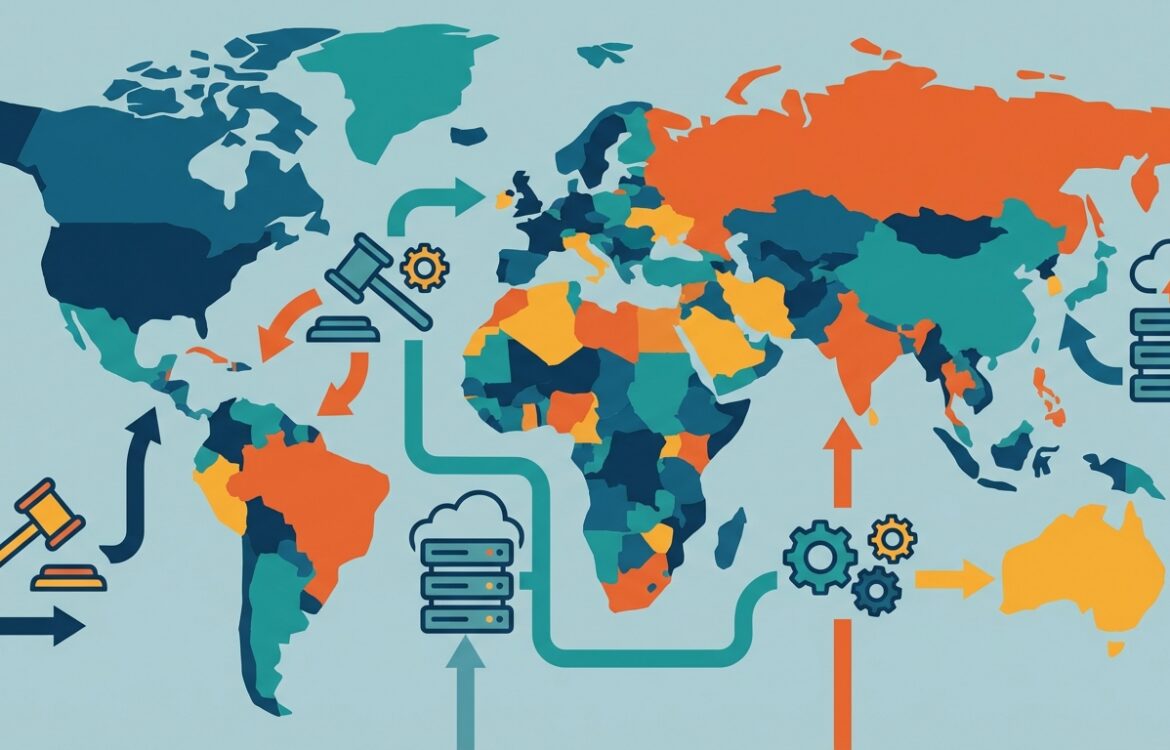

AI governance trends: 2026 and beyond — an evidence-led outlook on regulation, standards, and operational risk

This analytical review examines AI governance trends for 2026 and beyond, separating verified developments (regulatory timelines, standards work, government guidance) from areas of uncertainty (enforcement detail, geopolitical fragmentation). It synthesizes official sources and high-trust reporting to help teams, creators, and policymakers prepare for near-term governance shifts.

Responsible AI Operations: Governance That Works — Practical Guidance for Compliance and Oversight

A neutral, evidence-based guide to Responsible AI operations that summarizes international standards, regulator guidance (EU, UK, US, Singapore), and practical controls organisations can implement. Focused on governance, documentation, risk management, and realistic compliance steps — not legal advice.

Privacy for AI Products: A Practical Guide for Developers and Compliance Teams

A neutral, evidence-based practical guide to privacy considerations when building, deploying, or buying AI products. Covers definitions, what regulators in the EU, UK and U.S. say, DPIAs/ADMT obligations, practical controls (data minimisation, logging, model governance, differential privacy), common misconceptions, and open questions for product teams and privacy officers. This is informational, not legal advice.

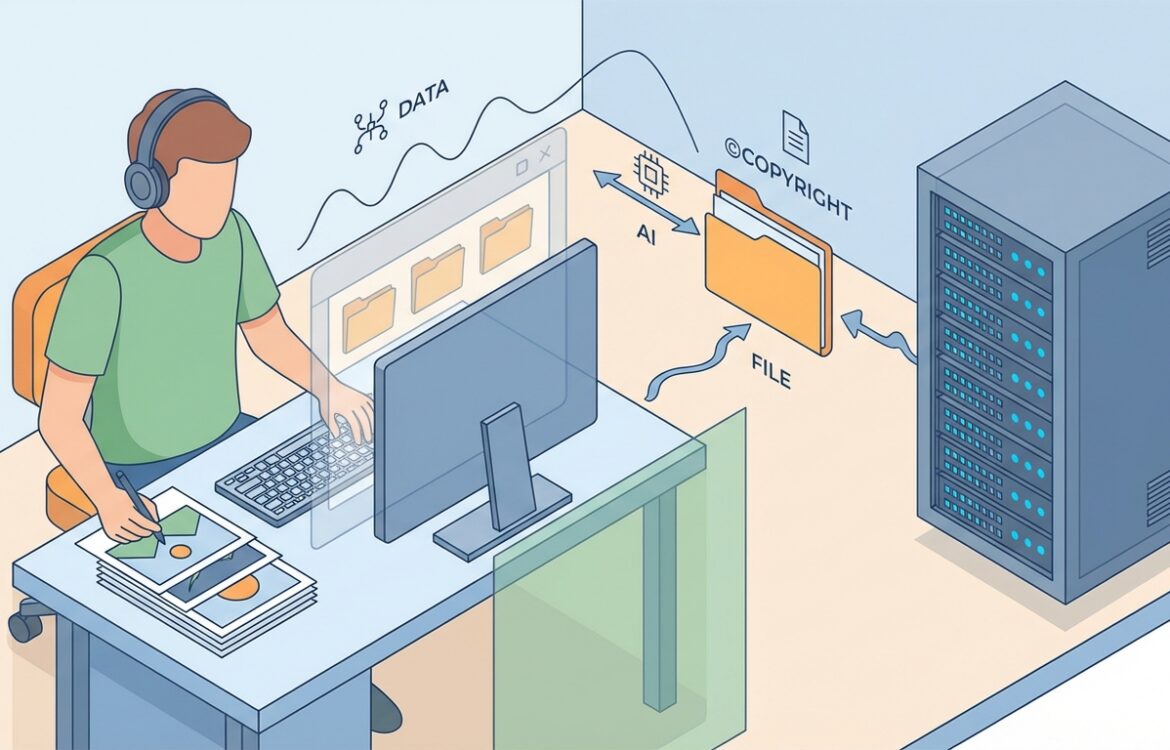

Copyright and AI: What Creators Should Know — A Practical Guide for Creators

An evidence‑based overview for creators on how copyright intersects with generative AI. Covers definitions, major regulatory developments and cases in the US, EU and UK, practical steps creators and small teams can take to reduce legal and reputational risk, common misconceptions, and open questions that may change how works are used to train and deploy AI.

Securing LLM Apps: Practical Threat Modeling for RAG, Fine‑Tuning, and Deployment

A practical, implementation‑focused guide to threat modeling Large Language Model (LLM) applications. Covers attack classes (prompt injection, model extraction, data poisoning), RAG/vector DB considerations, fine‑tuning risks, mitigations (access control, differential privacy, monitoring), testing and red‑teaming, and common implementation mistakes, all grounded in published research, vendor guidance, and standards.
