Author: Oliver Grant

LLMOps: Evaluation, Monitoring, and QA — Practical Guide for Engineering Reliable LLM Systems

A technical, evidence-based guide to LLMOps: Evaluation, Monitoring, and QA. Covers evaluation frameworks, production monitoring (tracing, embedding drift, hallucination detection), red‑teaming and QA workflows, design trade‑offs, common implementation mistakes, and runnable practices with references to OpenAI Evals, lm-eval, BEIR, LangSmith, Arize, and whylogs.

Career Moats in the AI Era: Building Durable Advantage with RAG, Fine‑Tuning, Evaluation, Tooling, and Infrastructure

Practical guidance for AI engineers who want to create durable, technical career moats in the AI era. Covers what a career moat is, high-value technical specialties (RAG, PEFT, evaluation, monitoring, governance), concrete trade-offs, common implementation mistakes, and testing/observability practices with citations to official docs and research.

How to Monetize Data with AI: Practical Business Models, Costs, and a 6‑Month Execution Plan

A practical, evidence‑based guide for product managers, data leaders, and founders who want to monetize data with AI. Covers realistic business models, step‑by‑step execution, tooling and pricing ranges, compliance checkpoints, risks, and metrics to measure ROI. Includes citations to vendor pricing and regulatory guidance so you can build a defensible plan.

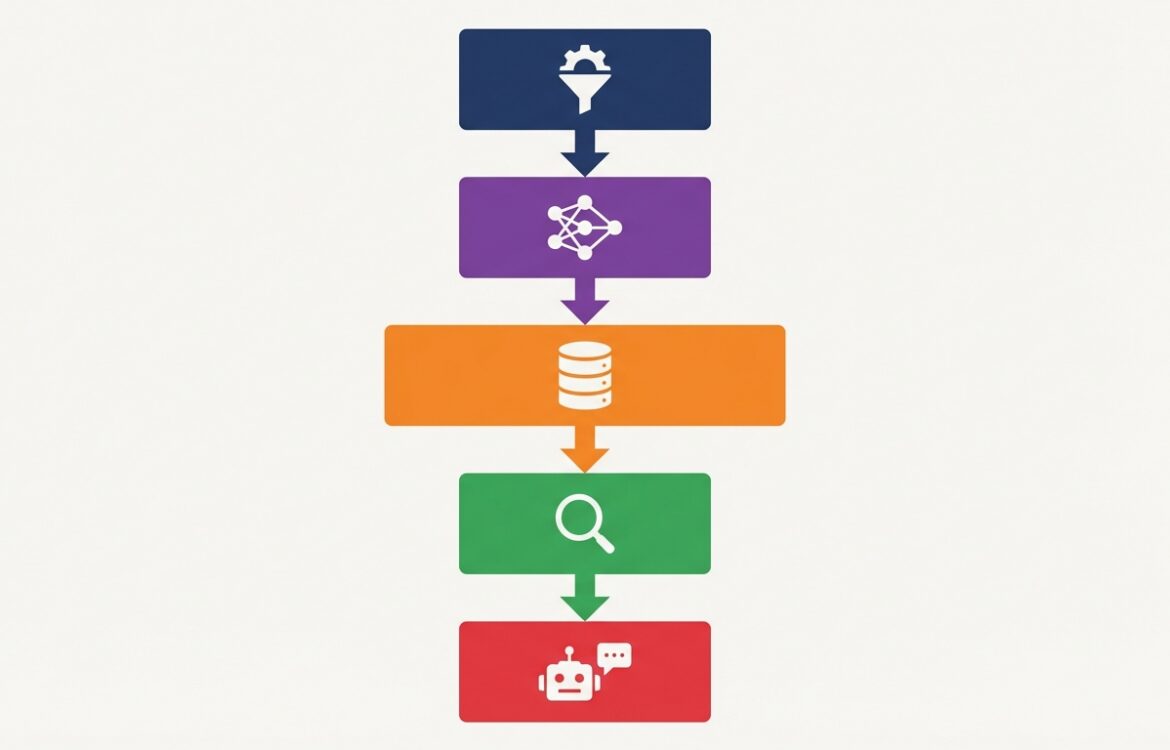

RAG in Production: A Practical Engineering Guide — Architecture, Trade-offs, and Operational Checklist

A practical, implementation-focused guide for engineers deploying Retrieval-Augmented Generation (RAG) systems. Covers architectures, retriever choices, vector databases, indexing and update patterns, security (including prompt-injection), evaluation metrics, monitoring, and common mistakes—grounded in academic and engineering sources and annotated with production references.

Inference and Infrastructure: Cost and Performance — Practical trade‑offs for serving LLMs

A technical guide to inference and infrastructure cost and performance trade‑offs for LLM-based systems. Covers RAG vs fine‑tuning, quantization and offload, batching and concurrency, vector store economics, tooling (Triton, DeepSpeed, FlexGen), and monitoring best practices with concrete implementation considerations and sources.

Fine-Tuning LLMs: When, Why, and How — Practical Guide to Methods, Trade-offs, and Deployment

A technical, implementation-focused guide to Fine-Tuning LLMs: When, Why, and How. Covers supervised fine-tuning, parameter-efficient methods (LoRA/adapters/PEFT), RAG vs tuning trade-offs, dataset curation, evaluation practices, deployment considerations, security risks, and common implementation mistakes backed by official docs and primary research.

AI Automation Economics: Turning Savings into Revenue with Practical ROI Strategies

A pragmatic guide for product leaders, operators, and founders who want to convert AI-driven cost savings into measurable revenue or margin gains. This article explains business models, a step-by-step execution plan, realistic costs and timelines, compliance risks, and the metrics you must track to make “Savings as Revenue” work in production.

Engineering Agents: Tools, Memory, and Reliability — Practical Architectures and Trade-offs

A practical, evidence-based guide for engineering agents that use tools, long-term memory, and production reliability patterns. This article compares RAG and fine-tuning, describes memory architectures, tool orchestration and sandboxing, and gives testing and monitoring best practices grounded in papers and vendor docs.

Archives

Calendar

| M | T | W | T | F | S | S |

|---|---|---|---|---|---|---|

| 1 | ||||||

| 2 | 3 | 4 | 5 | 6 | 7 | 8 |

| 9 | 10 | 11 | 12 | 13 | 14 | 15 |

| 16 | 17 | 18 | 19 | 20 | 21 | 22 |

| 23 | 24 | 25 | 26 | 27 | 28 | |