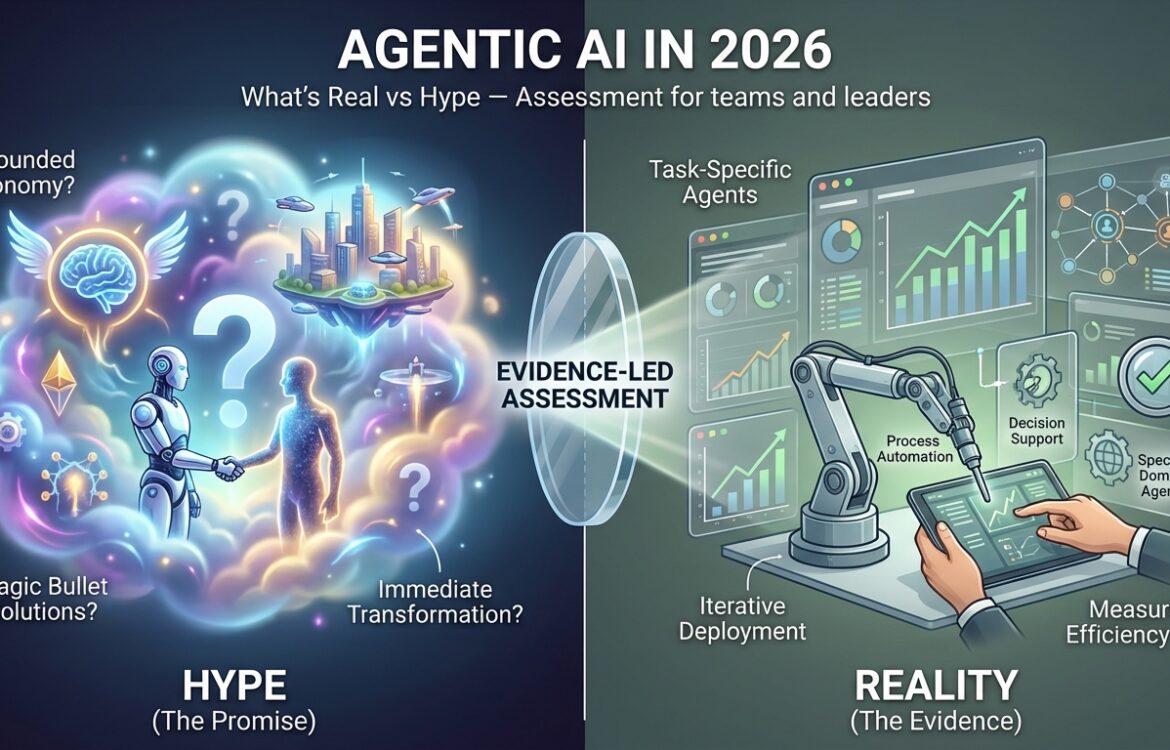

Agentic AI in 2026: What’s Real vs Hype — Evidence-led assessment for teams and leaders

This article examines Agentic AI in 2026: What’s Real vs Hype — focusing on which developments are documented today, which claims remain uncertain, and what measurable signals teams should track before deploying agentic systems. Throughout the article I separate verified signals (product launches, research papers, industry surveys) from open questions (robust alignment, long‑term governance, large‑scale safety), and I cite primary sources for key claims so readers can follow the evidence themselves. (microsoft.com)

What is happening now (verified signals)

Major cloud and software vendors have moved from experimental demos toward productizing agentic capabilities for enterprise workflows. Microsoft announced public preview paths and prebuilt agents in Copilot Studio and Microsoft 365 Copilot, positioning agents as task‑specific automations for sales, service, finance, and IT with dedicated controls for administrators. These product announcements are live and accompanied by vendor documentation and press coverage. (microsoft.com)

Vendor and community frameworks for building agents have matured. Open‑source and commercial stacks (LangChain / LangGraph and competing runtimes) now offer primitives for planning, tool invocation, state management, and observability — enabling production deployments that are more controllable than early proof‑of‑concept agents. LangChain’s ecosystem reports and multiple vendor guides document adoption and a path from prototypes to production. (langchain.com)

Market and analyst signals show growing enterprise interest and early deployments. Analyst firms and industry polls report increasing experimentation and forecasts for agent integration into business apps; Gartner and similar outlets have published forecasts and CIO polling indicating that a meaningful portion of organizations are piloting or planning task‑specific agents. These are market signals, not hard guarantees of success, but they reflect active enterprise investment. (gartner.com)

Academic and security research is rapidly responding. Recent papers explore alignment methods for agents (including variations on RLHF and constrained RL), the risks introduced when models move from “informative” to “agentic” behavior, and evaluation frameworks for agentic steerability and risk. This body of work documents both concrete mitigation approaches and existing failure modes. (arxiv.org)

Public conversations about privacy and safety are also visible in mainstream coverage. Privacy advocates and industry commentators have highlighted the risks that arise when agents need broad data access or persistent privileges to act on behalf of users; these debates appear in conference statements and news reporting. (businessinsider.com)

What’s driving the change

Several technical and business drivers explain why agentic systems are moving from lab experiments into product roadmaps:

- Tool integration and runtime orchestration: Modern agent frameworks let LLMs call external tools (APIs, databases, scripts) in planning–execute loops, enabling multi‑step workflows beyond single prompts. Tool integration and state management reduce brittle prompt engineering and make end‑to‑end automation feasible. (aiagenets.com)

- Productization by major platforms: Vendors such as Microsoft have integrated agents into flagship products (Copilot, Dynamics) and launched management controls aimed at enterprise governance — this commercial push accelerates real use cases. (microsoft.com)

- Operational demand: Routine, repetitive knowledge‑work tasks (ticket routing, document retrieval, simple decision trees) present clear ROI for narrow agents, and CIO surveys show organizations prioritizing these low‑risk productivity wins. Analyst reports capture that motivation. (gartner.com)

- Improved developer tooling and ecosystems: Open frameworks, libraries, and SDKs (LangChain, LangGraph, vendor SDKs) lower the cost of building agents and managing their lifecycle, making prototypes easier to convert to production systems. (langchain.com)

- Research on alignment and evaluation: The academic community is producing alignment techniques tailored to agents (constraint learning, adversarial games, multi‑agent evaluation), which both illuminate risks and provide practical mitigation templates used in industry proofs of concept. (arxiv.org)

Taken together, these drivers explain why agentic features are appearing in enterprise roadmaps: they answer concrete business needs while being enabled by a maturing stack of tools, research, and vendor investment. (langchain.com)

What experts and credible sources disagree about

Several important disagreements exist today, and distinguishing them is essential to avoid premature conclusions.

- How autonomous agents should be governed and monitored: Vendors emphasize administrative controls and “Copilot Control Systems” for governance, while security researchers warn that surface‑level controls are insufficient for nuanced, goal‑seeking agents that can chain actions across tools. The tension is between product controls and deeper runtime enforcement; both camps provide evidence for their positions. (microsoft.com)

- The scale and timing of enterprise adoption: Analyst forecasts vary. Some forecasts and vendor claims emphasize rapid uptake in 2025–2026 driven by packaged agents, while other surveys show a large share of organizations still evaluating and experimenting without broad production use. These differences stem from methodology (vendor signal vs independent survey) and from how “agent deployment” is defined. Treat these numbers as directional, not deterministic. (gartner.com)

- Security and safety maturity: Researchers demonstrate concrete attack modes (prompt injection, data exfiltration, misuse of tool access) and propose mitigation frameworks, but practitioners disagree on whether current guardrails are sufficient for broad agentic deployment. Academic evaluation frameworks show measurable improvements with new techniques, while security incident reporting stresses remaining gaps — both are supported by published work and threat analyses. (fusionchat.ai)

- Long‑term risk framing: Public figures and some researchers highlight existential or systemic risks if agents scale unchecked; others argue near‑term operational safety (privacy, abuse, economic impact) is the immediate concern. These are different risk horizons rather than strictly opposing technical claims, and the literature supports attention to both time scales. (businessinsider.com)

When sources disagree, the evidence tends to cluster by scope: product teams point to feature roadmaps and pilot results; researchers publish controlled evaluations and attack models; threat intelligence documents real incidents. The appropriate response is to map claims to evidence types and avoid transferring vendor optimism into certainty about broad capability or safety. (microsoft.com)

Practical implications (for teams, creators, or users)

Deciding whether—or how—to adopt agentic systems requires risk‑aware, capability‑aware choices. Below are practical, evidence‑based recommendations for different roles.

- For engineering and product teams: Start with narrowly scoped, well‑instrumented agents whose access to tools and data is tightly constrained. Use RAG (retrieval‑augmented generation) patterns and least‑privilege tool access to reduce data exposure. Monitor chain‑of‑thought and tool invocations in logs and set out-of-band approvals for actions with high consequence. Frameworks and vendor guidance document these patterns. (aiagenets.com)

- For security, privacy, and compliance teams: Treat agentic deployments as a new attack surface: enforce MFA, secrets management, prompt sanitization, and prompt‑injection detection; log and retain invocation records for audits; and classify agents by risk level (low, medium, high) with different assurance requirements. Security research and incident analysis emphasize prompt injection and exfiltration as top risks. (fusionchat.ai)

- For business leaders and product owners: Prioritize high‑value, low‑risk use cases (administrative automations, internal knowledge retrieval, templated workflows) and measure outcomes empirically before expanding scope. Analyst polling shows many organizations find value in narrow agents first; keep expectations aligned with those measured outcomes. (gartner.com)

- For creators and developers building agents: Use community‑tested libraries and playbooks that include guardrails, and incorporate adversarial testing into CI (simulated prompt injection, role‑play misuse scenarios). Academic work offers concrete alignment and constraint learning techniques that can be adapted to engineering workflows. (arxiv.org)

In short: agentic systems are useful when they replace repeatable, well‑specified processes; they are risky when they need broad, unfettered access to critical systems or sensitive data. Instrumentation, least‑privilege design, and adversarial testing are not optional if you intend to scale agents in production. (langchain.com)

What to watch next (signals and metrics)

If you are monitoring whether agentic AI is moving from controlled pilots to safe, scalable production, track these signals and metrics:

- Deployment breadth and scope: Numbers of agents in production, percentage of apps embedding task‑specific agents, and the distribution of agent roles (internal admin vs customer‑facing). Analyst surveys and vendor reports provide periodic snapshots to compare against your own telemetry. (gartner.com)

- Failure modes and incident reports: Frequency and severity of prompt‑injection incidents, data exfiltration events, or unauthorized actions triggered by agents. Track whether incident types decrease after introducing mitigation controls and whether new attack modes emerge. Security vendor reporting and threat‑lab summaries are primary sources for these signals. (securonix.com)

- Standardization and regulation: Publication of industry standards, audit frameworks for agentic behavior, and regulatory guidance (e.g., EU AI Act interpretations) that clarify obligations for high‑risk systems. These legal and standards developments change compliance costs and deployment patterns. (gartner.com)

- Research benchmarks: Progress in alignment benchmarks, agentic robustness metrics (steerability, constraint satisfaction), and open datasets that measure multi‑step tool usage. New peer‑reviewed work is a leading indicator of whether technical gaps are closing. (arxiv.org)

- Vendor guardrail maturity: Presence of hardened runtime controls (sandboxing, tool permission layers, verifiable audit trails) in major agent platforms. Vendor blogs and release notes document these capabilities as they ship. (microsoft.com)

Operational teams should instrument internal metrics that mirror these external signals: agent invocation rates, tool‑call patterns, failure/retry counts, escalation rates to human operators, and time‑to‑detect anomalous behavior. Improvements in these KPIs over time indicate safer scaling. (langchain.com)

This article is for informational purposes and does not constitute investment or business advice.

FAQ

Q: What exactly does “Agentic AI in 2026” mean for everyday software?

A: The phrase describes systems where LLMs (or other models) do more than answer prompts: they plan, choose tools or APIs, and execute multi‑step tasks with some degree of autonomy. In 2026 this typically means narrow, task‑specific agents embedded in apps (help desks, document assistants, IT automation) rather than general autonomous entities. Evidence comes from vendor product launches and developer frameworks that document these patterns. (microsoft.com)

Q: Are current agentic AIs safe enough for customer‑facing financial or healthcare workflows?

A: Not without strong, evidence‑based controls. Security research and incident analyses highlight prompt injection and data exfiltration risks; compliance obligations (HIPAA, GDPR) require documented safeguards. Organizations deploying agents into regulated domains should adopt strict least‑privilege access, comprehensive auditing, and adversarial testing before any customer‑facing rollout. (fusionchat.ai)

Q: Will agentic AI replace knowledge workers in 2026?

A: Evidence does not support broad replacement in 2026. Most documented deployments target task augmentation (reducing repetitive work, improving retrieval) rather than full job replacement. Analyst surveys show strong interest in productivity gains but also emphasize human oversight and staged rollouts. (gartner.com)

Q: How can my team test an agent safely in production?

A: Follow a staged approach: define a narrow scope and acceptance criteria, sandbox external tool access, run adversarial tests (prompt injection, malicious responses), instrument logs and alerts, and require human approval for high‑impact actions. Use vendor control systems and open‑source frameworks that provide observability and permissioning. (microsoft.com)

Q: What metrics should executives ask for when evaluating agent pilots?

A: Request measurable outcomes (time saved, error rate improvement), safety KPIs (number of blocked actions, prompt‑injection attempts, unauthorized data access), adoption metrics (active users, task completion rate), and incident response metrics (MTTR for agent anomalies). Compare these to pre‑agent baselines and require periodic independent reviews. (langchain.com)

You may also like

I explore how AI is reshaping work, creativity, education, and decision-making, grounding every topic in evidence rather than hype. I write about real trade-offs—open vs closed models, compute costs, information quality, and organizational impact—so readers can understand what actually matters and what to watch next.

Archives

Calendar

| M | T | W | T | F | S | S |

|---|---|---|---|---|---|---|

| 1 | 2 | 3 | 4 | 5 | ||

| 6 | 7 | 8 | 9 | 10 | 11 | 12 |

| 13 | 14 | 15 | 16 | 17 | 18 | 19 |

| 20 | 21 | 22 | 23 | 24 | 25 | 26 |

| 27 | 28 | 29 | 30 | |||