Day: January 1, 2026

Designing Reliable Prompt Packs: 50 Prompts per Role — A Repeatable Prompt Engineering Workflow

A systems-first guide to creating, validating, and operating prompt packs of 50 prompts per role. Learn a principled prompt structure, a step-by-step workflow for building role-based prompt collections, and QA processes to detect failure modes, measure repeatability, and maintain prompt quality over time.

Agentic workflows: Designing multi-step systems for reliable AI operations

A practical guide to designing agentic workflows: multi-step, tool-enabled LLM systems that prioritize reliability, repeatability, and observable QA. Learn core principles, a recommended prompt structure, a step-by-step example workflow, and concrete failure modes with QA checks to make agentic systems production-ready.

Multimodal AI: The New Default Interface — Evidence, Drivers, and Practical Implications

A grounded, evidence-led examination of how multimodal AI is shifting from research curiosity to a practical interface layer. This article separates documented signals (product launches, benchmarks, standards activity) from areas of uncertainty, and draws out the implications for teams, creators, and users.

Automate Work with AI: Practical Recipes for Reliable Prompt Engineering and Workflows

A practical, systems-first guide to automating work with AI: methods for building reliable, repeatable prompt engineering and agent workflows, with step-by-step recipes, failure modes, and quality-control practices for production systems.

AI for Finance Teams: Faster, Clearer Analysis — A Practical, Step-by-Step Implementation Guide

A hands-on guide for finance leaders and analysts who want to use AI to accelerate reporting, forecasting, and reconciliation. Learn a step-by-step workflow, the required tools and data controls, validation and model-risk checkpoints, common pitfalls, and practical examples you can apply in the next 30–90 days.

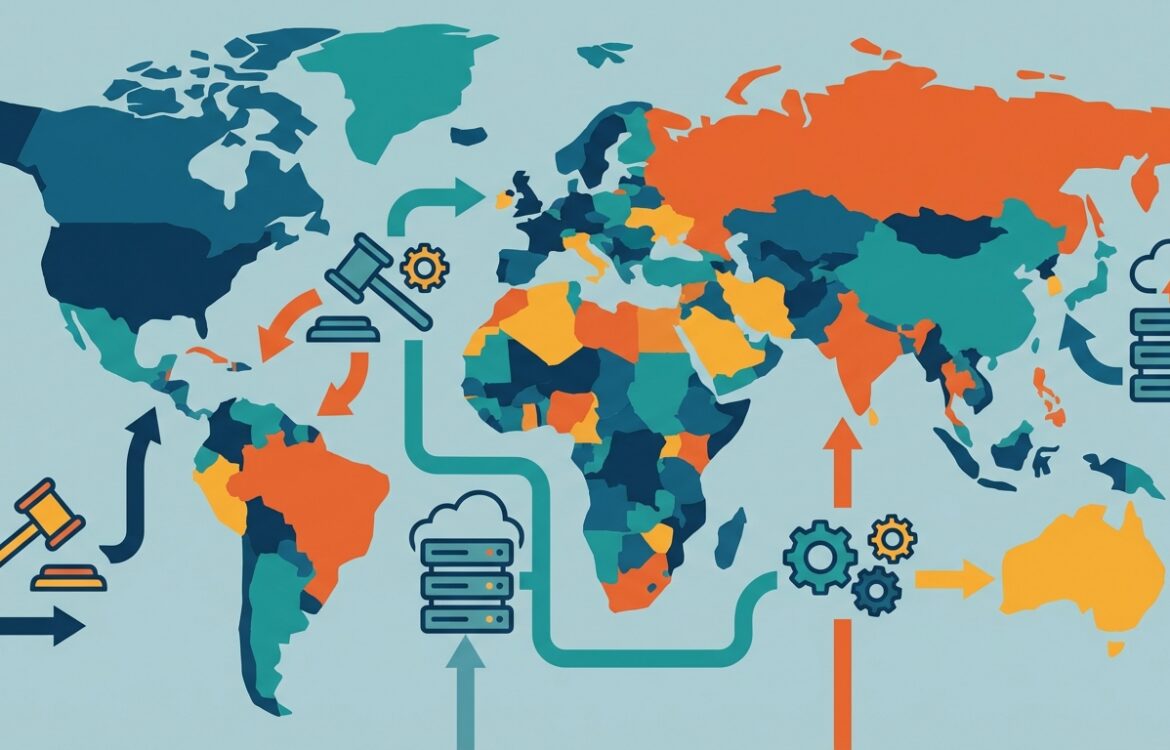

AI governance trends: 2026 and beyond — an evidence-led outlook on regulation, standards, and operational risk

This analytical review examines AI governance trends for 2026 and beyond, separating verified developments (regulatory timelines, standards work, government guidance) from areas of uncertainty (enforcement detail, geopolitical fragmentation). It synthesizes official sources and high-trust reporting to help teams, creators, and policymakers prepare for near-term governance shifts.